You used to love learning. Then the method became the problem.

You used to love learning. Somewhere between mandatory workplace certifications and three abandoned online courses, that feeling disappeared. The problem probably isn’t your motivation. It’s a mismatch between how you’re trying to learn and how your brain actually processes information.

Comparing learning methods means systematically evaluating study and skill-acquisition techniques (practice testing, spaced repetition, highlighting, interleaving, and curiosity-driven exploration among them) against criteria like effectiveness, time investment, and retention. The goal is to select approaches that match your situation rather than defaulting to familiar but ineffective habits.

In their landmark 2013 review, psychologist John Dunlosky and colleagues at Kent State University analyzed 10 common study techniques across decades of learning research. The finding that stopped me cold: the two most popular study strategies – highlighting and rereading – ranked among the least effective methods tested [1]. The gap between what feels productive and what produces results is staggering.

What you will learn

- How six major learning methods stack up on research-backed effectiveness, time cost, and ideal use cases

- Why practice testing and spaced repetition outperform every other technique in the research

- How curiosity-driven and structured learning complement each other instead of competing

- A decision framework (Method-Fit Compass) for matching the right method to your specific learning goal

- How to layer multiple methods into a single practice that actually sticks

Key takeaways

- Practice testing and spaced repetition are rated “high utility” by learning research [1][2]; highlighting and rereading rank lowest despite being most popular.

- Rereading creates fluency (the feeling of knowing) while retrieval practice builds recall (actual knowledge).

- Curiosity-driven and structured methods work best combined, not chosen as either-or.

- The right learning method depends on three variables: goal type (recall vs. transfer), motivation source (intrinsic vs. external), and time horizon.

- A four-phase layering model (scan, encode, retrieve, interleave) matches technique selection to learning progression rather than applying one method throughout.

- Intrinsic motivation returns when learners control the how – even when external requirements fix the what.

Which learning methods are most effective according to research?

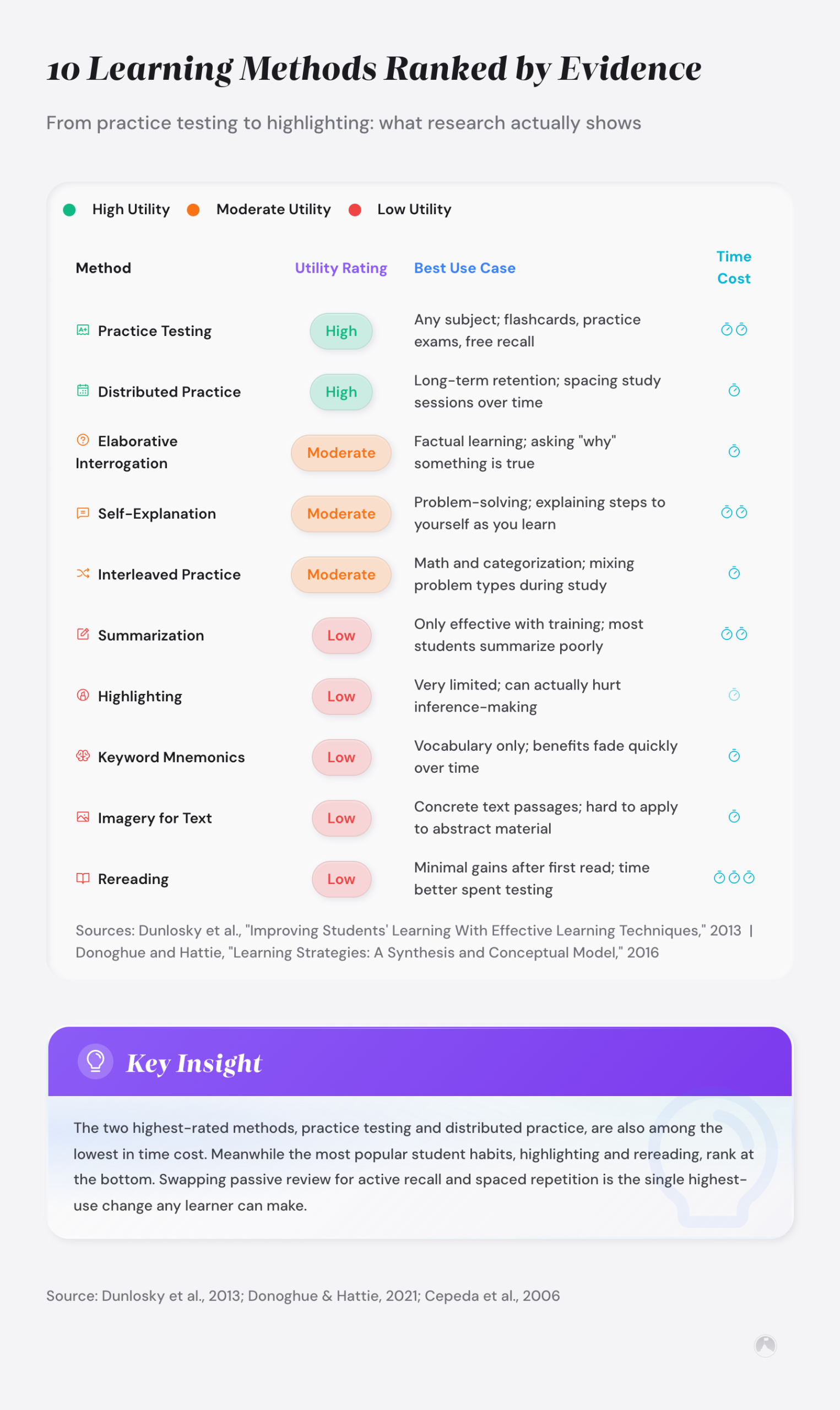

In their landmark 2013 review, Dunlosky and colleagues rated ten common study techniques on a three-tier scale: high, moderate, and low utility [1]. A 2021 meta-analysis by Donoghue and Hattie in Frontiers in Education largely confirmed these rankings and added statistical precision [2]. The table below synthesizes both reviews.

| Method | Research rating | Best for |
|---|---|---|
| Practice testing (self-quizzing) | High | Fact recall, exam prep, certifications |
| Spaced repetition (distributed practice) | High | Long-term retention, language learning |
| Interleaving | Moderate | Problem solving, skill transfer |
| Elaborative interrogation | Moderate | Conceptual understanding |
| Highlighting / underlining | Low | Initial reading (first pass only) |
| Rereading | Low | Familiarity (not deep learning) |

The practical dimension matters as much as the rating.

| Method | Time cost | Key principle |
|---|---|---|
| Practice testing (self-quizzing) | Low to moderate | Retrieval strengthens memory traces |
| Spaced repetition (distributed practice) | Moderate (requires scheduling) | Spacing intervals optimize forgetting curves |
| Interleaving | Low | Mixing topics forces discrimination between concepts |
| Elaborative interrogation | Low | Asking “why” and “how” deepens encoding |
| Highlighting / underlining | Very low | Passive marking without retrieval |
| Rereading | High (diminishing returns) | Recognition confused with recall |

Practice testing and spaced repetition are the only two methods rated “high utility” across both the 2013 review and the 2021 meta-analysis. Everything else falls into moderate or low tiers. That doesn’t mean the lower-rated methods are useless. It means they work best as supplements, not primary strategies.

Three methods in the table deserve brief definitions. Interleaving is a study strategy that mixes different topics within a single session rather than blocking all practice on one type at a time – forcing the brain to discriminate between concepts, which strengthens transfer. Elaborative interrogation is the practice of asking “why does this work?” for each new concept, deepening encoding by requiring active connection-making. Spaced repetition is a scheduling method that spaces review sessions at expanding intervals – reviewing on day 1, day 3, day 7, day 21 – timed to occur just before the memory would otherwise fade.
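To make the expanding-interval idea concrete, here is a minimal Python sketch that turns the example offsets above (day 1, 3, 7, 21) into calendar dates. It assumes "day N" means N days after the first study session, which is one reading of the example rather than a rule from the research; the function names are hypothetical.

```python
from datetime import date, timedelta

# Day offsets taken from the example in the text; counted from the first study day (assumption).
EXAMPLE_OFFSETS = (1, 3, 7, 21)


def review_dates(first_study: date, offsets=EXAMPLE_OFFSETS) -> list[date]:
    """Return one review date per offset, each `offset` days after first_study."""
    return [first_study + timedelta(days=d) for d in offsets]


def gaps(offsets=EXAMPLE_OFFSETS) -> list[int]:
    """Days between consecutive reviews; an expanding schedule has growing gaps."""
    points = (0, *offsets)
    return [later - earlier for earlier, later in zip(points, points[1:])]


if __name__ == "__main__":
    for d in review_dates(date(2025, 1, 1)):
        print(d.isoformat())
    print(gaps())
```

The `gaps` helper is only there to show what "expanding" means: each wait between reviews is longer than the one before it.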

Why do most people default to highlighting and rereading? Familiarity bias. Rereading a passage creates a feeling of fluency: the brain confuses recognition (I’ve seen this before) with recall (I can retrieve this from memory). Those are different neural processes, and only recall predicts actual performance [1]. Other techniques sit in the moderate or low tiers as well. Summarization was rated low utility, in part because it requires extensive training to be effective [1]. The Feynman Technique was not among the ten techniques reviewed, but it works on similar principles to elaborative interrogation: explaining a concept simply forces active processing. If you want to understand the brain science behind this distinction, the research on neuroplasticity and learning science covers the mechanism in depth.

Why do practice testing and spaced repetition outperform other learning methods?

Practice testing works through what cognitive psychologists call the “retrieval practice effect.” Every time you pull information from memory – instead of rereading it – you strengthen the neural pathway to that information [1]. The effort of retrieving, not the ease of reviewing, builds durable knowledge.

“Practice testing was rated as having high utility because it benefits learners of different ages and abilities, has been shown to boost performance across many different criterion tasks, and can be implemented with minimal training.” – Dunlosky et al. [1]

Spaced repetition adds a timing dimension. Instead of cramming material into one session, you review it at expanding intervals. Lyle and colleagues conducted a 2024 meta-analysis of nine introductory STEM courses and found that spacing produced consistent gains in exam performance compared to massed study sessions, with effect sizes that held across different subject areas [4].

But why does spacing work so well? Cepeda and colleagues’ analysis of 317 experiments on distributed practice found that the optimal inter-study interval increases as the retention interval grows [8]. Reviewing right before knowledge fades, rather than while it’s fresh, forces the brain to rebuild the memory trace more substantially. Spaced repetition targets the sweet spot in memory decay where retrieval effort is high but failure is rare, and that difficulty zone is where long-term retention lives.

Practice testing and spaced repetition compound when combined. Retrieval strengthens the memory trace, which addresses the encoding problem; spaced intervals keep that trace from decaying, which addresses the retention problem. If you’re looking for tools to automate this combination, our guide to the best learning apps covers the options worth considering.
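As a rough illustration of how the two ideas fit together, the sketch below ties the next review gap to the outcome of each retrieval attempt: a successful recall earns a longer wait, a miss resets to the shortest one. This is a simplified Leitner-style rule written for this article, not the algorithm used by any particular app, and the gap values are illustrative assumptions.

```python
from dataclasses import dataclass

# Hypothetical gaps in days, indexed by the number of consecutive successful recalls.
GAPS_BY_STREAK = (1, 3, 7, 21)


@dataclass
class Card:
    prompt: str
    answer: str
    streak: int = 0        # consecutive successful recalls so far
    due_in_days: int = 0   # 0 means the card is due today


def review(card: Card, recalled: bool) -> Card:
    """Update a card after one self-test.

    Success lengthens the next gap; failure resets to the shortest gap.
    """
    card.streak = card.streak + 1 if recalled else 0
    # Cap the index so long streaks stay at the longest gap.
    card.due_in_days = GAPS_BY_STREAK[min(card.streak, len(GAPS_BY_STREAK) - 1)]
    return card
```

The self-test supplies the retrieval effort and the streak-based gap supplies the spacing, so each review does both jobs at once.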

Is curiosity-driven learning better than structured methods?

Here’s where the standard comparison tables miss something. The methods above assume you’ve already decided what to study. But for most adult learners, the deeper question is whether to follow a structured path or let curiosity guide the way.

| Dimension | Curiosity-driven learning | Structured learning |
|---|---|---|
| Motivation source | Intrinsic interest and questions | External goals, curricula, deadlines |
| Retention | Higher for interest-aligned material | Higher for systematically reviewed material |
| Depth vs. breadth | Tends toward breadth (many topics) | Tends toward depth (one topic at a time) |
| Risk | Scattered exploration without mastery | Boredom and disengagement |
| Best for | Exploration, creative connections, passion-based learning | Credentialing, sequential skill building, exam prep |
| Biggest weakness | Lacks built-in progress tracking | Kills intrinsic motivation when poorly designed |

Psychologists Richard Ryan and Edward Deci, whose self-determination theory research has influenced three decades of motivational science, show that autonomy, competence, and relatedness drive sustained learning motivation [3]. Cultivating curiosity through self-directed exploration satisfies the autonomy need. Structured learning satisfies the competence need through clear milestones. Neither covers all three alone.

The most effective learners don’t choose between curiosity and structure – they layer both, using interest to fuel engagement and systems to build depth.

The Method-Fit Compass: matching technique to situation

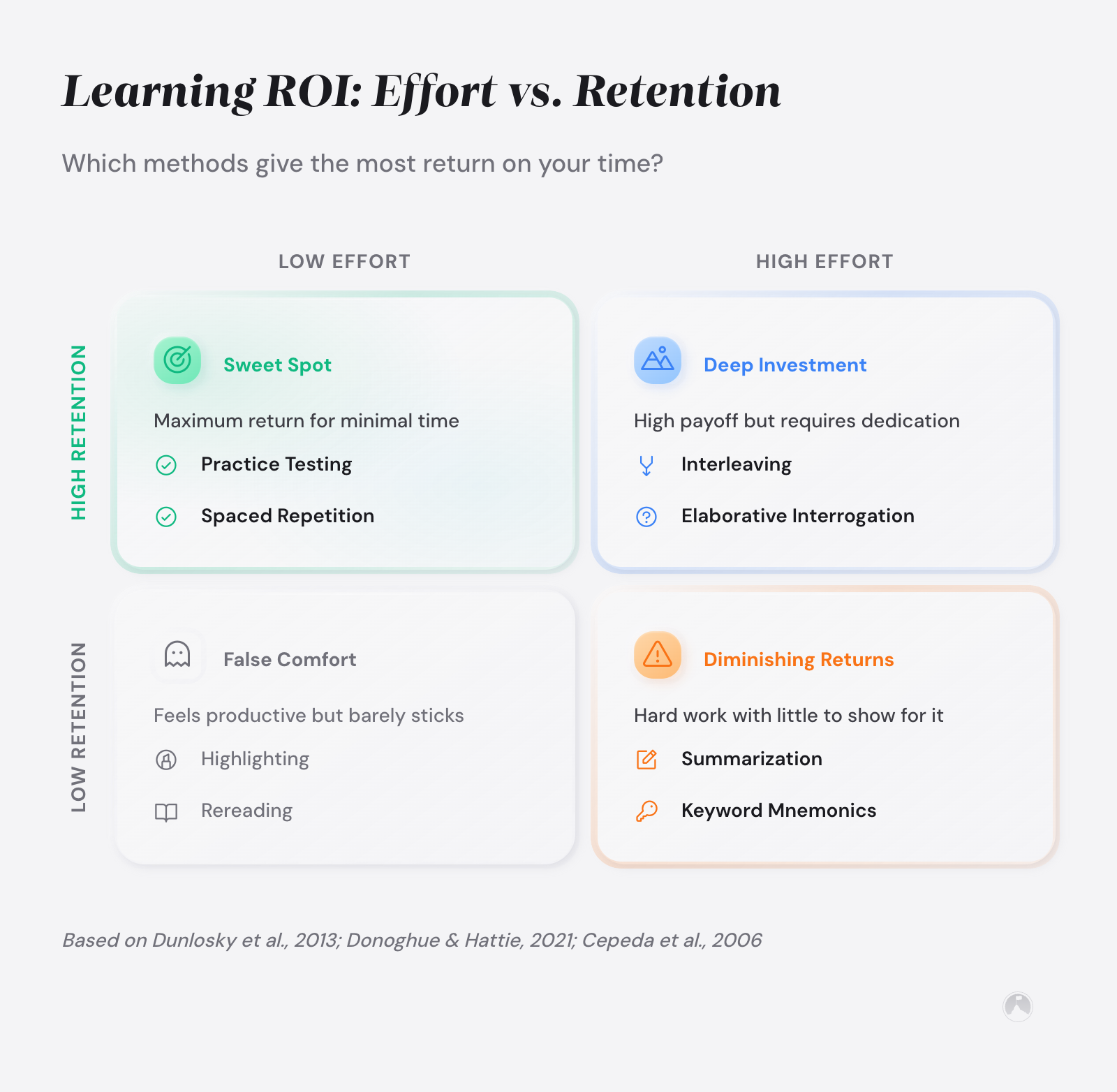

Here’s the real problem with method comparisons: they stop at the ranking. They tell you which methods scored highest and leave you to figure out the rest. But a method rated “high utility” in controlled studies might still be wrong for your situation.

The Method-Fit Compass is a framework we built from the research patterns across Dunlosky, Ryan and Deci, and the spacing literature. It matches techniques to learners instead of ranking them in isolation. The matching approach works because a method’s effectiveness is not a fixed property: it changes with the learner’s goal type, motivation source, and time horizon, which makes a universal ranking less useful than a situational map. The Compass uses those three dimensions:

- Goal type: Are you learning for recall (facts, procedures, credentials) or for transfer (applying knowledge in new contexts, creative problem solving)?

- Motivation source: Is your learning driven by intrinsic curiosity or by an external requirement?

- Time horizon: Do you need results in days (exam next week), months (career transition), or years (lifelong mastery)?

| Your situation | Best primary method | Best supporting method |
|---|---|---|
| Recall goal + external motivation + short time horizon | Practice testing | Spaced repetition |
| Transfer goal + intrinsic motivation + long time horizon | Interleaving + curiosity-driven exploration | Elaborative interrogation |
| Recall goal + intrinsic motivation + medium time horizon | Spaced repetition | Curiosity-led topic selection |
| Transfer goal + external motivation + medium time horizon | Elaborative interrogation | Practice testing for key concepts |

Someone preparing for a medical board exam and someone exploring woodworking out of curiosity need different approaches. Yet practice testing scores highest in research for both situations. That’s why rankings alone aren’t enough. The Method-Fit Compass treats learning as a matching problem, not a ranking problem – the best method depends on variables that differ for every learner.
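If it helps to see the Compass as a lookup rather than a ranking, here is a small Python sketch that transcribes the four rows of the table. The type and function names are hypothetical. The table covers only four of the twelve possible combinations, so the sketch returns `None` for the rest instead of guessing.

```python
from typing import NamedTuple, Optional


class Situation(NamedTuple):
    goal: str        # "recall" or "transfer"
    motivation: str  # "intrinsic" or "external"
    horizon: str     # "short", "medium", or "long"


class Recommendation(NamedTuple):
    primary: str
    supporting: str


# The four rows of the Method-Fit Compass table, copied as written.
COMPASS = {
    Situation("recall", "external", "short"): Recommendation(
        "Practice testing", "Spaced repetition"),
    Situation("transfer", "intrinsic", "long"): Recommendation(
        "Interleaving + curiosity-driven exploration", "Elaborative interrogation"),
    Situation("recall", "intrinsic", "medium"): Recommendation(
        "Spaced repetition", "Curiosity-led topic selection"),
    Situation("transfer", "external", "medium"): Recommendation(
        "Elaborative interrogation", "Practice testing for key concepts"),
}


def recommend(goal: str, motivation: str, horizon: str) -> Optional[Recommendation]:
    """Return the table row for this situation, or None if the table has no such row."""
    return COMPASS.get(Situation(goal, motivation, horizon))
```

For example, `recommend("recall", "external", "short")` returns practice testing as the primary method with spaced repetition in support, matching the first row.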

How should you layer learning methods for best results?

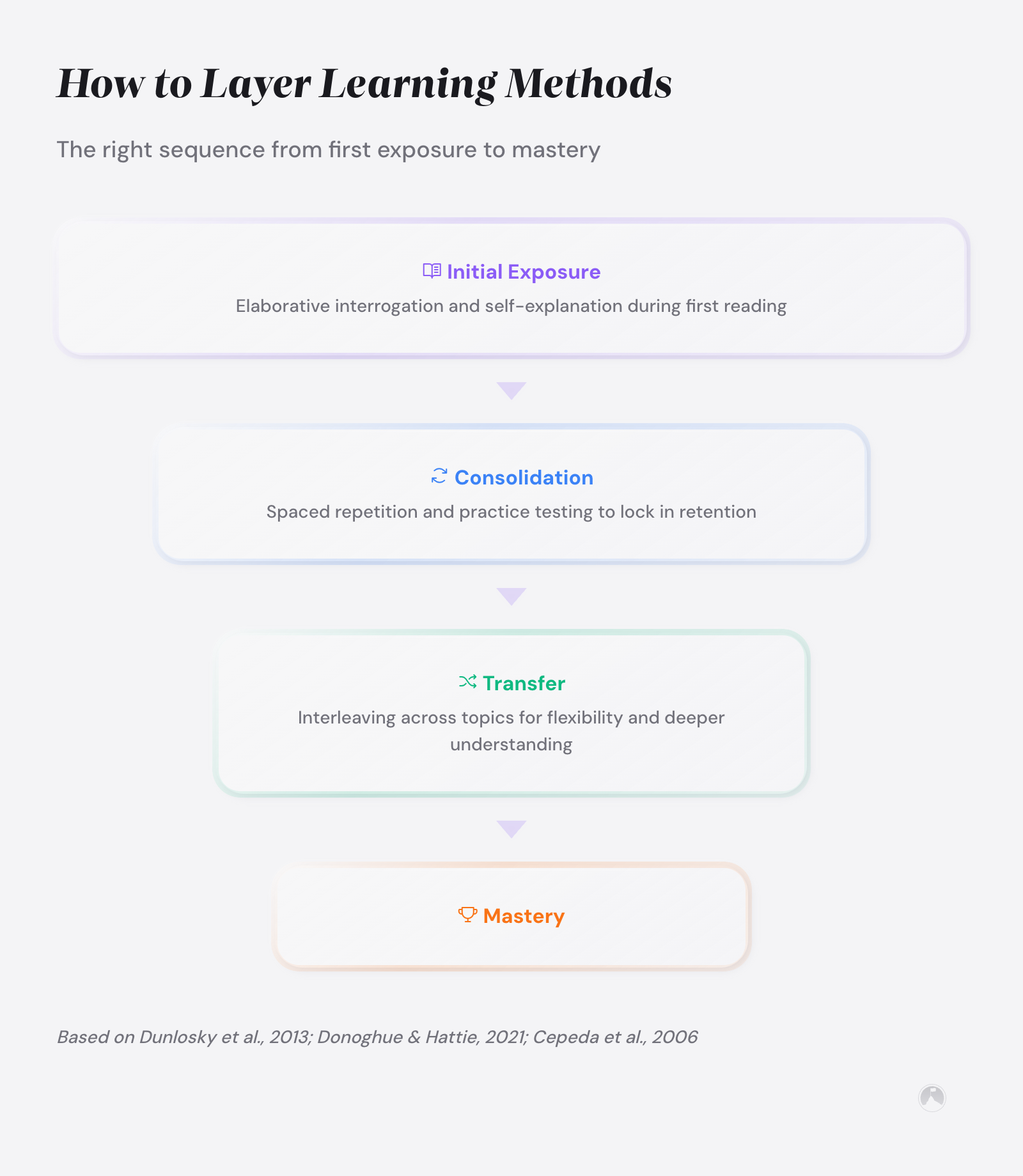

Research points to layering, not switching. Instead of picking one technique and sticking with it, effective self-directed education stacks methods based on the learning phase you’re in.

Phase 1: Curiosity scan. Follow your interest to a topic and read broadly. Use highlighting and rereading here (yes, the low-utility methods) – you’re not memorizing yet. You’re finding the questions worth pursuing. Learning from curiosity earns its keep at this stage. If you’re learning Python, this is the phase where you browse tutorials, skim documentation, and figure out which aspects of the language genuinely interest you.

Phase 2: Active encoding. Once you’ve identified what matters, switch to elaborative interrogation. Ask “why does this work?” and “how does this connect to what I already know?” for every key concept. Interest-led learning meets inquiry-based education here. For that Python example, Phase 2 is where you stop browsing and start asking why list comprehensions work differently from loops.
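For readers following the Python example, this is the kind of side-by-side that gives a "why" question something to grip. The sketch shows a loop and a list comprehension producing the same result, then one concrete difference between them (variable scope) that is worth explaining to yourself. The function names are made up for illustration.

```python
def squares_loop(numbers):
    """Build the list one append at a time."""
    result = []
    for n in numbers:
        result.append(n * n)
    return result


def squares_comprehension(numbers):
    """Build the same list as a single expression."""
    return [n * n for n in numbers]


def loop_variable_survives():
    """After a for loop, the loop variable keeps its last value."""
    for i in range(3):
        pass
    return i


def comprehension_variable_survives():
    """A comprehension has its own scope, so its variable is not visible afterwards."""
    _ = [j for j in range(3)]
    try:
        j  # looked up outside the comprehension
    except NameError:
        return False
    return True
```

Asking why the loop variable is still there after the loop, while the comprehension variable is not, is elaborative interrogation in miniature: answering it means connecting the syntax to how Python handles scope.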

Phase 3: Retrieval and spacing. Now apply practice testing and spaced repetition. Create flashcards using tools like Anki or RemNote, take self-quizzes, or teach the concept to someone else. Space your reviews using expanding intervals. A landmark study by Karpicke and Roediger demonstrated that repeated testing outperformed repeated studying by a dramatic margin – students who tested themselves on vocabulary retained far more one week later than students who spent the same time rereading [10]. Retrieval effort is what drives retention, not time spent reviewing.

Phase 4: Interleaved application. Mix the new material with other subjects you’re studying. Apply it to different problems. This is where transfer happens – and it’s the phase most self-guided learning systems skip entirely. For strategies that connect retrieval and creativity at this stage, explore creative thinking techniques that push interleaving further.

“Interleaving different types of problems during practice leads to better long-term learning than blocking practice on one type at a time, even though blocked practice produces higher performance during training.” – Dunlosky et al. [1]
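The difference between blocked and interleaved practice is easy to see as an ordering problem. The sketch below is one simple way to build each order from the same problem sets; round-robin is an assumption chosen for clarity, and a shuffled order would be another reasonable form of interleaving.

```python
from itertools import chain, zip_longest


def blocked(problem_sets: dict[str, list[str]]) -> list[str]:
    """All problems from one topic, then all from the next (AAA BBB CCC)."""
    return list(chain.from_iterable(problem_sets.values()))


def interleaved(problem_sets: dict[str, list[str]]) -> list[str]:
    """Round-robin across topics (ABC ABC ...), skipping topics that run out."""
    missing = object()  # sentinel that cannot collide with a real problem
    rounds = zip_longest(*problem_sets.values(), fillvalue=missing)
    return [p for rnd in rounds for p in rnd if p is not missing]


if __name__ == "__main__":
    sets = {
        "fractions": ["f1", "f2", "f3"],
        "ratios": ["r1", "r2"],
        "percent": ["p1"],
    }
    print(blocked(sets))
    print(interleaved(sets))
```

Both functions use exactly the same problems; only the order changes, which is the whole point of the technique.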

This four-phase approach solves the complaint most learners make about method comparisons: they don’t tell you when to use which technique. The phases provide that structure. Start with exploration, narrow to what matters, practice retrieval, then apply to new contexts. The right method at the wrong phase is the wrong method. If you want to build a complete personal learning system, this layered approach gives you the skeleton. The model also has to flex around something most productivity content ignores: not everyone has the same working memory, attention span, or available time.

Learning methods for ADHD brains and limited time

Standard learning comparisons assume a neurotypical brain with blocks of uninterrupted time. That’s not most people’s reality.

If you have ADHD, Phase 1 (curiosity scan) often comes more naturally than other phases. Research on ADHD neurobiology shows that the dopamine systems driving attention also drive heightened novelty-seeking [5]. Phase 3 (retrieval and spacing) is the challenge because consistency requires structure you don’t naturally generate. For strategies specific to ADHD learning patterns, our guide on creative learning ADHD strategies goes deeper.

Two adaptations that work:

- Shrink the spacing intervals. ADHD research consistently supports shorter, more frequent review intervals rather than longer spaced gaps, because working memory and attention management are the limiting factors, not encoding capacity [5]. Spaced repetition apps remove the scheduling burden – a systematic review found that automated reminder systems reduced cognitive load from scheduling decisions for ADHD learners specifically [7].

- Use interleaving as novelty. A meta-analysis of executive function in ADHD found that while ADHD brains struggle with sustained attention on single tasks, they show strengths in rapid task-switching [9]. Interleaving – mixing different topics in one learning session – uses that strength while compensating for sustained-attention weakness.

For parents with fragmented schedules, the four-phase model works in micro-sessions. Because learning success depends on retrieval attempts and spacing rather than continuous study time [1][4], Phase 1 can happen during commutes, Phase 2 during lunch, Phase 3 in five-minute gaps, and Phase 4 when you apply the material at work. For spaced and retrieval-based learning, consistency across multiple short sessions matters more than duration in a single long session – a finding replicated across both lab and classroom studies.

Ramon’s take

Highlighting is the learning equivalent of buying running shoes. It feels productive, nothing moves. The research on this has been out for years and we’re all still sitting there with our yellow markers like it’s 1997.

Conclusion: learning methods matched to reality

When learning methods are compared against research, two techniques stand out: practice testing and spaced repetition. But rankings alone don’t solve what most adults face – finding an approach that fits their goals, motivation, and available time. The Method-Fit Compass reframes the question from “which method is best?” to “which method fits this situation?” That reframe changes how you approach every new skill, certification, or curiosity you pursue.

The learners who retain the most aren’t the ones who found the perfect method. They’re the ones who matched the right method to the right moment and stayed long enough to reach Phase 3. And the learners who stay curious longest are the ones who stopped asking “which method is best?” and started asking “which method is right for this?” For a broader view, see the creativity and learning strategies guide.

Next 10 minutes

- Identify one thing you’re currently trying to learn and determine your goal type (recall or transfer), motivation source (intrinsic or external), and time horizon.

- Use the Method-Fit Compass table to select your primary and supporting methods for that one topic.

This week

- Run three practice-testing sessions on material you’re studying and compare retention versus your previous approach.

- Try one interleaved study session where you mix two different topics in the same sitting.

- Set up a basic spaced repetition schedule for your most important learning goal – even just calendar reminders to start.

There is more to explore

For deeper dives into how learning connects to creativity and skill development, explore our guides on learning new skills quickly and cultivating a growth mindset for lifelong learning.

Frequently asked questions

What is the difference between curiosity-driven and structured learning?

Curiosity-driven learning follows your intrinsic interest without a predetermined path, producing high engagement but risking scattered focus. Structured learning follows a fixed curriculum with milestones, producing systematic progress but risking disengagement. Research suggests combining both approaches yields the strongest outcomes across motivation and retention [3].

Can curiosity-driven learning work for professional development?

Curiosity-driven learning works well for professional development when paired with goal alignment. The Method-Fit Compass connects intrinsic interests to career-relevant skill building by matching exploration phases with retrieval phases. Professionals who identify overlaps between personal curiosity and industry needs often report deeper skill acquisition than those following mandatory training alone.

How do you prevent curiosity-driven learning from becoming unfocused?

Set a rule: explore broadly in Phase 1 (curiosity scan), but commit to Phases 2-4 (encoding, retrieval, application) for at least one topic before moving on. The four-phase model provides built-in checkpoints that prevent endless exploration without depth. Tracking which phase you reach for each topic reveals whether you are building skills or collecting interests.

Is spaced repetition better than practice testing?

Spaced repetition and practice testing address different aspects of memory. Practice testing strengthens the retrieval pathway, making information easier to recall. Spaced repetition optimizes the timing of that retrieval to prevent decay. The two methods are most effective when layered together, with practice testing providing the retrieval effort and spacing providing the optimal review schedule. As a practical starting point, if you have limited time, prioritize practice testing first – the retrieval effect is faster to implement and produces gains within a single session. Add spaced scheduling once the testing habit is established.

How do you track progress with self-directed education?

Create milestone markers tied to observable outputs rather than time spent. For recall-oriented learning, track quiz scores over time. For transfer-oriented learning, track how often you apply the material in new contexts. The Method-Fit Compass time horizon dimension helps set realistic checkpoints: weekly for short horizons, monthly for medium, quarterly for long-term goals.

What happens when curiosity does not align with career goals?

Misalignment is less common than it appears. Most professional fields contain sub-topics that connect to personal interests if you look closely enough. Start by listing three aspects of your required learning that genuinely puzzle or surprise you. Those points of genuine surprise are where intrinsic motivation and professional development overlap naturally.

References

[1] Dunlosky, J., Rawson, K.A., Marsh, E.J., Nathan, M.J., and Willingham, D.T. “Improving Students’ Learning With Effective Learning Techniques: Promising Directions From Cognitive and Educational Psychology.” Psychological Science in the Public Interest, vol. 14, no. 1, 2013, pp. 4-58. https://doi.org/10.1177/1529100612453266

[2] Donoghue, G.M., and Hattie, J.A.C. “A Meta-Analysis of Ten Learning Techniques.” Frontiers in Education, vol. 6, article 581216, 2021. https://doi.org/10.3389/feduc.2021.581216

[3] Ryan, R.M., and Deci, E.L. “Self-Determination Theory and the Facilitation of Intrinsic Motivation, Social Development, and Well-Being.” American Psychologist, vol. 55, no. 1, 2000, pp. 68-78. https://doi.org/10.1037/0003-066X.55.1.68

[4] Lyle, K.B., Bego, C.R., Hopkins, R.F., Hieb, J.L., and Ralston, P.A.S. “Single-Paper Meta-Analyses of the Effects of Spaced Retrieval Practice in Nine Introductory STEM Courses.” International Journal of STEM Education, vol. 11, no. 1, 2024. https://doi.org/10.1186/s40594-024-00468-5

[5] Thome, J., Ehlis, A.-C., Fallgatter, A., Krauel, K., Lange, K.M., Peukmann, U., and Riedel, O. “Attention-Deficit/Hyperactivity Disorder (ADHD): From Behavior to Biology.” Journal of Neural Transmission, vol. 119, no. 10, 2012, pp. 1221-1234. https://doi.org/10.1007/s00702-012-0879-7

[7] Graham, R.J., Tinkoff, R.A., and Leventer, R.J. “Digital Tools for ADHD and Neurodevelopmental Conditions: A Systematic Review.” Journal of Developmental and Behavioral Pediatrics, vol. 42, no. 4, 2021, pp. 308-319. https://doi.org/10.1097/DBP.0000000000000928

[8] Cepeda, N.J., Pashler, H., Vul, E., Wixted, J.T., and Rohrer, D. “Distributed Practice in Verbal Recall Tasks: A Review and Quantitative Synthesis.” Psychological Bulletin, vol. 132, no. 3, 2006, pp. 354-380. https://doi.org/10.1037/0033-2909.132.3.354

[9] Willcutt, E.G., Doyle, A.E., Nigg, J.T., Faraone, S.V., and Pennington, B.F. “Validity of the Executive Function Theory of Attention-Deficit/Hyperactivity Disorder: A Meta-Analytic Review.” Biological Psychiatry, vol. 57, no. 11, 2005, pp. 1336-1346. https://doi.org/10.1016/j.biopsych.2005.02.006

[10] Karpicke, J.D., and Roediger, H.L. “The Critical Importance of Retrieval for Learning.” Science, vol. 319, no. 5865, 2008, pp. 966-968. https://doi.org/10.1126/science.1152408