Good Enough Checker

Research shows the last 20% of effort on most tasks produces less than 5% improvement in outcomes. This free tool gives you a project-specific quality threshold so you know exactly where to stop polishing. Four short inputs, 30 seconds, and you walk away with a calibrated effort gauge, a done checklist, and a research-backed time cap tailored to the work in front of you.

Find the line where your work is done well and not overdone

A research-backed tool that gives perfectionists a project-specific threshold for when to stop polishing and start shipping.

Perfectionism costs more than it returns

Research shows that the last 20% of effort on most tasks produces less than 5% improvement in outcomes. This tool calibrates the exact quality threshold where additional effort yields diminishing returns for your specific project.

4 inputs. 30 seconds. Permission to ship.

What this tool solves

Generic perfectionism advice tells you to “ship it” and “lower your standards.” That phrasing is useless when you are actually in the work, because it gives you no way to tell whether the next edit is still adding value or just quiet over-polishing. The honest question is not whether to lower the bar. It is where the bar for this specific project should sit in the first place.

The Good Enough Checker answers that question in concrete terms. A grant proposal and a Slack update do not warrant the same quality floor, and a tax return and a brainstorming memo do not share a finish line. This tool takes your project type, stakes, audience, and hours already invested, then returns a calibrated threshold plus a checklist of specific done criteria (“readable at a glance,” “acceptable from arm’s length,” “meets the stated brief”) so you can stop negotiating with yourself about when to finish and just check the list.

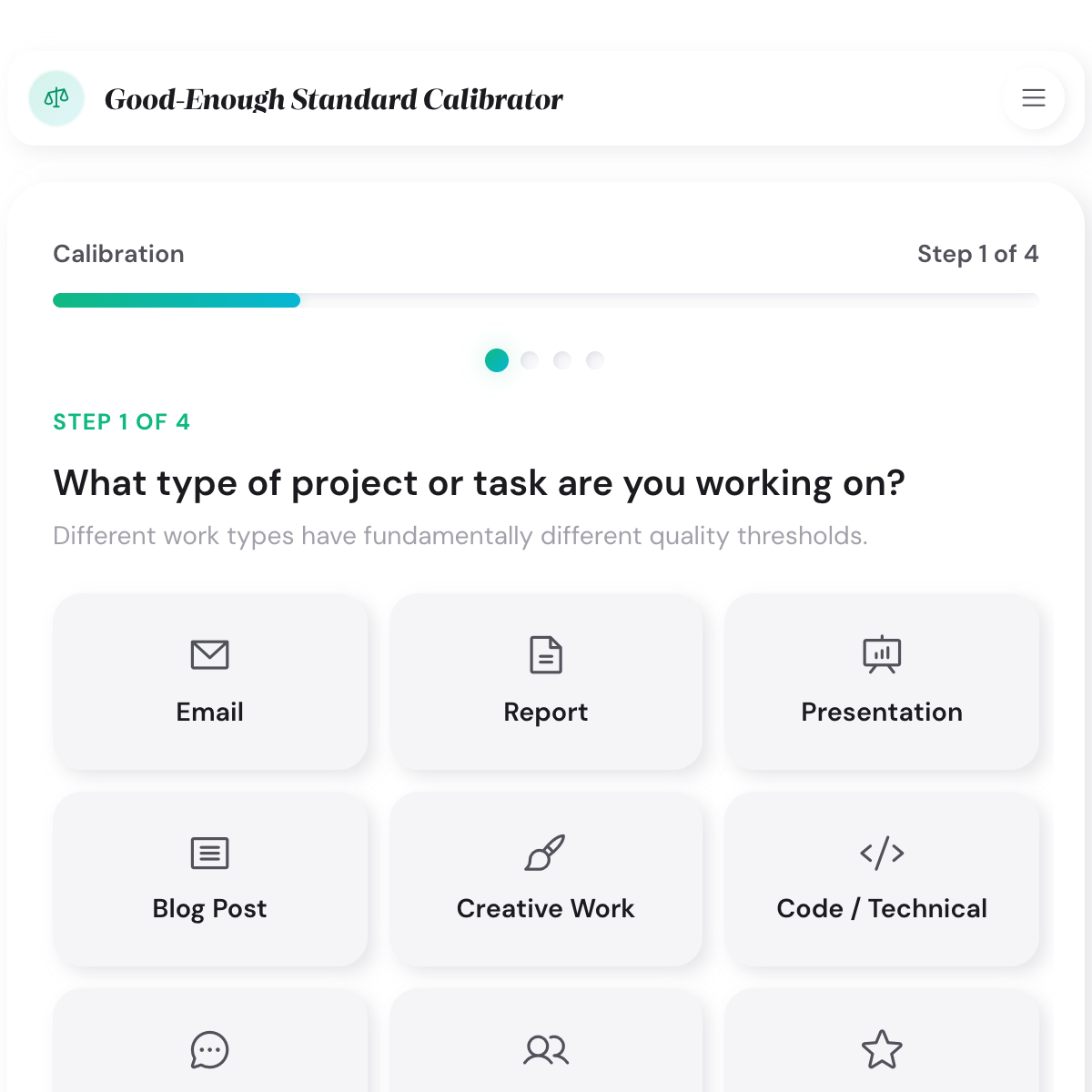

Screenshot walkthrough

Here is a walkthrough using a common case this tool was built for: a medium-stakes written report for an external audience, with roughly 5 to 7 hours of work available. That combination lands on the Balanced Calibrator archetype at around a 70% quality threshold, which is the most common output profile. The screenshots below follow that run end to end.

How the good-enough calibration works

The tool runs a short four-question wizard, then maps your answers onto three outputs: a threshold percentage, a done checklist, and a time cap. All three are driven by the research on diminishing returns. Below is what each step is actually doing behind the screen.

Project type

Fifteen project types (report, presentation, blog post, code, creative work, resume, tax return, home improvement, and more) each carry a different built-in quality curve. A blog post hits the point of diminishing returns earlier than a tax return, and a client-facing report plateaus differently than a working draft. Picking the right type front-loads the most important adjustment before the stakes and audience inputs refine it further.

Stakes and audience

Stakes (low, medium, high) and audience visibility shift the curve. High stakes with a visible audience push the threshold up, because the cost of an error is higher. Low stakes with a private audience push it down, because polishing further does not pay back. Most perfectionists overestimate the stakes on everyday tasks, which is why this step usually lowers the threshold rather than raising it.

Hours invested and hours available

The time inputs do two things. They let the tool flag whether you are already past the point where additional hours produce meaningful improvement, and they set a realistic time cap you can enforce. The cap draws on field-specific research (consulting hours for reports, content marketing data for blog posts, career research for resumes) so it reflects what real practitioners actually spend rather than a generic ideal.
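For readers who think in code, the time step can be sketched roughly as follows. This is a minimal illustration, not the tool's actual logic: the cap values in `ASSUMED_TIME_CAPS`, the `check_time` function, and the reading of "hours available" as hours still remaining are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical caps in hours per project type. Illustrative values only,
# not the field-specific figures the tool draws on.
ASSUMED_TIME_CAPS = {"report": 8.0, "blog post": 4.0, "resume": 3.0}


@dataclass
class TimeCheck:
    cap_hours: float        # the cap you can actually enforce
    remaining_hours: float  # hours left before hitting the cap
    past_cap: bool          # True once invested hours reach the cap


def check_time(project_type: str, hours_invested: float,
               hours_available: float) -> TimeCheck:
    """Compare hours already invested against an assumed cap."""
    # Assumption: the enforceable cap can never exceed the total time
    # that exists for the project (invested plus still available).
    total_time = hours_invested + hours_available
    cap = min(ASSUMED_TIME_CAPS[project_type], total_time)
    remaining = max(0.0, cap - hours_invested)
    return TimeCheck(cap_hours=cap, remaining_hours=remaining,
                     past_cap=hours_invested >= cap)


if __name__ == "__main__":
    print(check_time("report", hours_invested=5, hours_available=2))
    print(check_time("blog post", hours_invested=5, hours_available=1))
```

Under these made-up caps, a report with 5 hours invested and 2 available still has room to run, while a blog post with 5 hours invested is already flagged as past the cap.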

The calibrated output

You land on one of three archetypes: Efficient Executor (around 50% effort warranted), Balanced Calibrator (around 70%), or High-Stakes Perfectionist (90% or more). Each archetype comes with a done checklist specific to your project type and a time cap. The checklist is what makes the result usable. “Consistent formatting” and “readable at a glance” are checkable. “It feels done” is not.
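The mapping from inputs to an archetype could look something like the sketch below. Only the three archetype names and their approximate percentages come from the description above. The base values, the size of the stakes and audience shifts, the clamping range, and the cutoffs between archetypes are hypothetical numbers chosen for illustration, as are the names `calibrate` and `archetype`.

```python
# Hypothetical starting thresholds (percent) for a few project types.
BASE_THRESHOLD = {"blog post": 55, "report": 65, "tax return": 85}

# Hypothetical adjustments: higher stakes and a more visible audience
# raise the threshold, lower stakes and a private audience reduce it.
STAKES_SHIFT = {"low": -10, "medium": 0, "high": 10}
AUDIENCE_SHIFT = {"private": -5, "internal": 0, "external": 5}


def calibrate(project_type: str, stakes: str, audience: str) -> int:
    """Return a quality threshold in percent, clamped to 40..95."""
    raw = (BASE_THRESHOLD[project_type]
           + STAKES_SHIFT[stakes]
           + AUDIENCE_SHIFT[audience])
    return max(40, min(95, raw))


def archetype(threshold: int) -> str:
    """Map a threshold to an archetype using assumed cutoffs at 60 and 80."""
    if threshold >= 80:
        return "High-Stakes Perfectionist"
    if threshold >= 60:
        return "Balanced Calibrator"
    return "Efficient Executor"


if __name__ == "__main__":
    for inputs in [("report", "medium", "external"),
                   ("blog post", "low", "private"),
                   ("tax return", "high", "external")]:
        t = calibrate(*inputs)
        print(inputs, t, archetype(t))
```

With these assumed numbers, a medium-stakes report for an external audience comes out at 70 and lands on Balanced Calibrator. The real tool uses fifteen project types and its own curves, so treat this as a way to see the shape of the logic rather than its values.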

The research behind good enough

The core logic behind the tool comes from Herbert Simon, the Nobel laureate who coined the term satisficing in 1956 to describe decision-makers who stop searching when an option is good enough rather than exhausting every possibility. Simon argued that satisficing often beats optimizing in real-world conditions, because the time cost of continued search usually exceeds the marginal gain in outcome quality. The Good Enough Checker takes that logic and applies it to task completion rather than decision-making.

The idea that maximizing backfires draws on Barry Schwartz’s research on maximizers versus satisficers, summarized in his book The Paradox of Choice. Schwartz found that maximizers (people who keep searching for the best possible option) report lower satisfaction, higher regret, and more time lost than satisficers who settle for good enough and move on. The project-specific curves in the tool also draw on Pareto-style diminishing returns research and field-specific data: management consulting research on report quality, content marketing data on blog posts, and home improvement satisfaction studies showing that project completion predicts satisfaction more strongly than finish quality does.

Who gets the most out of this tool

- Freelancers who lose an hour re-reading a proposal nobody will scrutinize at that depth

- Managers who rewrite the same Slack message four times before hitting send

- Students re-checking an assignment they already know is strong

- Creatives sitting on finished work because one pixel feels off

- Consultants burning billable hours on slide aesthetics the client will never notice

- Writers stuck in edit loop five, six, and seven on a draft that was ready at three

- Perfectionists who want a concrete threshold rather than another pep talk to “just ship it”

- Teams looking for a shared vocabulary for “done” instead of relying on gut feel

Related articles and guides

- Setting Realistic Standards Guide – How to set thresholds that drive quality without driving burnout.

- Progress Over Perfection Practices – Habits that keep you shipping when the urge to polish takes over.

- Breaking Free From Perfectionism – The longer route when the pattern runs deeper than a single project.

- Overcoming Perfectionism – The full research-backed guide to perfectionism, including the Standards Audit Method for telling productive standards apart from destructive ones.

- Building Systems That Beat Perfectionism – A four-step framework for building systems that get work shipped, useful when willpower alone keeps failing against the urge to polish.

- Perfectionism and burnout research – What longitudinal studies actually show about how perfectionism drives burnout, and the evidence behind capping effort before it costs you.

- Perfectionism for creative professionals – How creatives use the Version 1.0 Framework to finish and ship work instead of holding it back for one more revision.

Related growth tools

- Perfectionism Type Quiz – Find out which of three research-defined patterns is driving the over-polishing.

- Self-Coaching Session – A structured GROW walkthrough for a decision you keep stalling on.

- Growth vs Fixed Mindset Assessment – The mindset lens that often sits under the “it has to be perfect” impulse.

Run one quick calibration on whatever project is sitting open right now. The threshold you get back is the one you can actually enforce, because it is tied to a checklist and a time cap rather than a feeling about whether the work is ready.