A better way to make high-stakes decisions

A weighted decision matrix turns a messy comparison into a structured, defensible recommendation. Name your options, weight the criteria that matter to you, and the tool ranks every option by score and runs a sensitivity analysis so you know exactly how solid your result is.

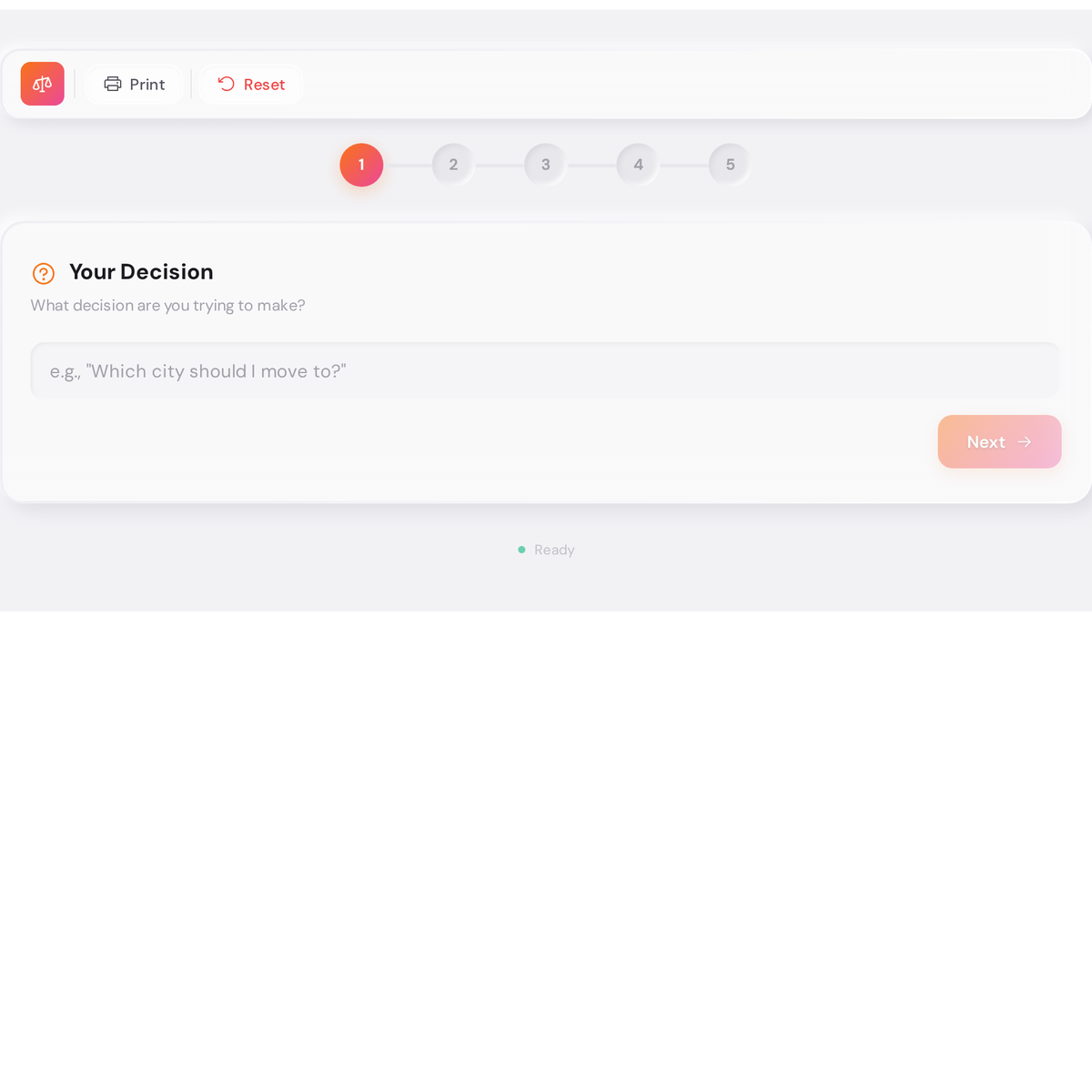

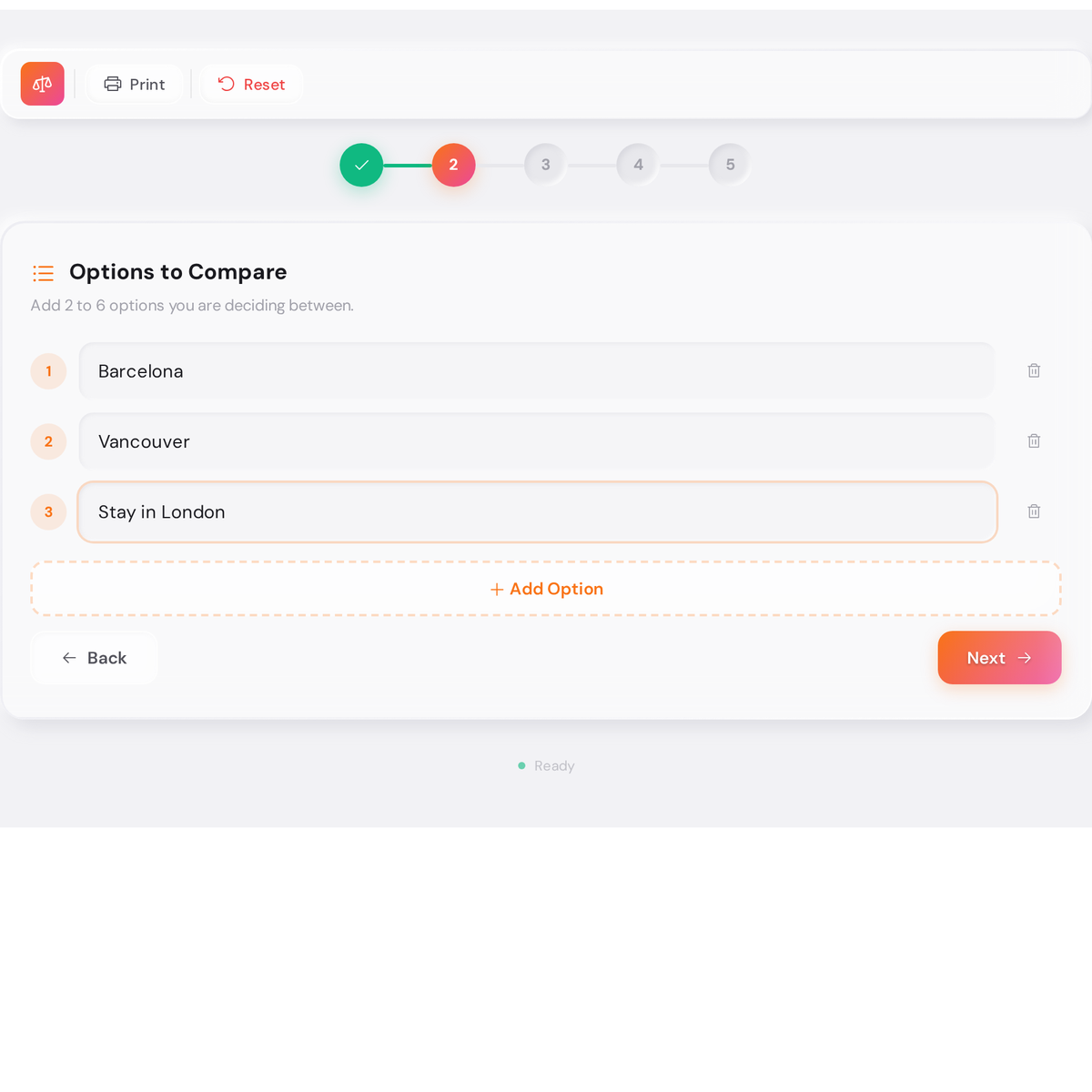

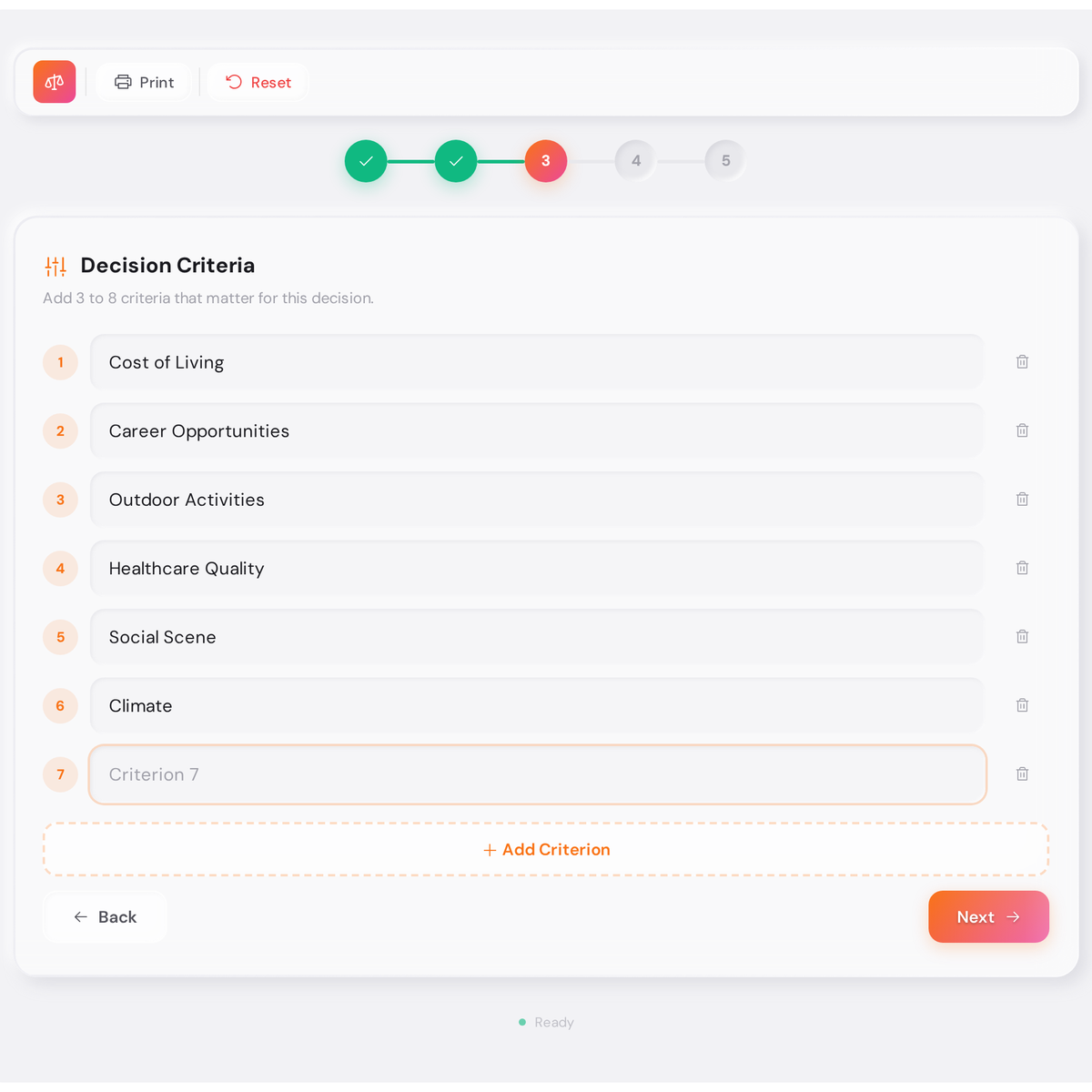

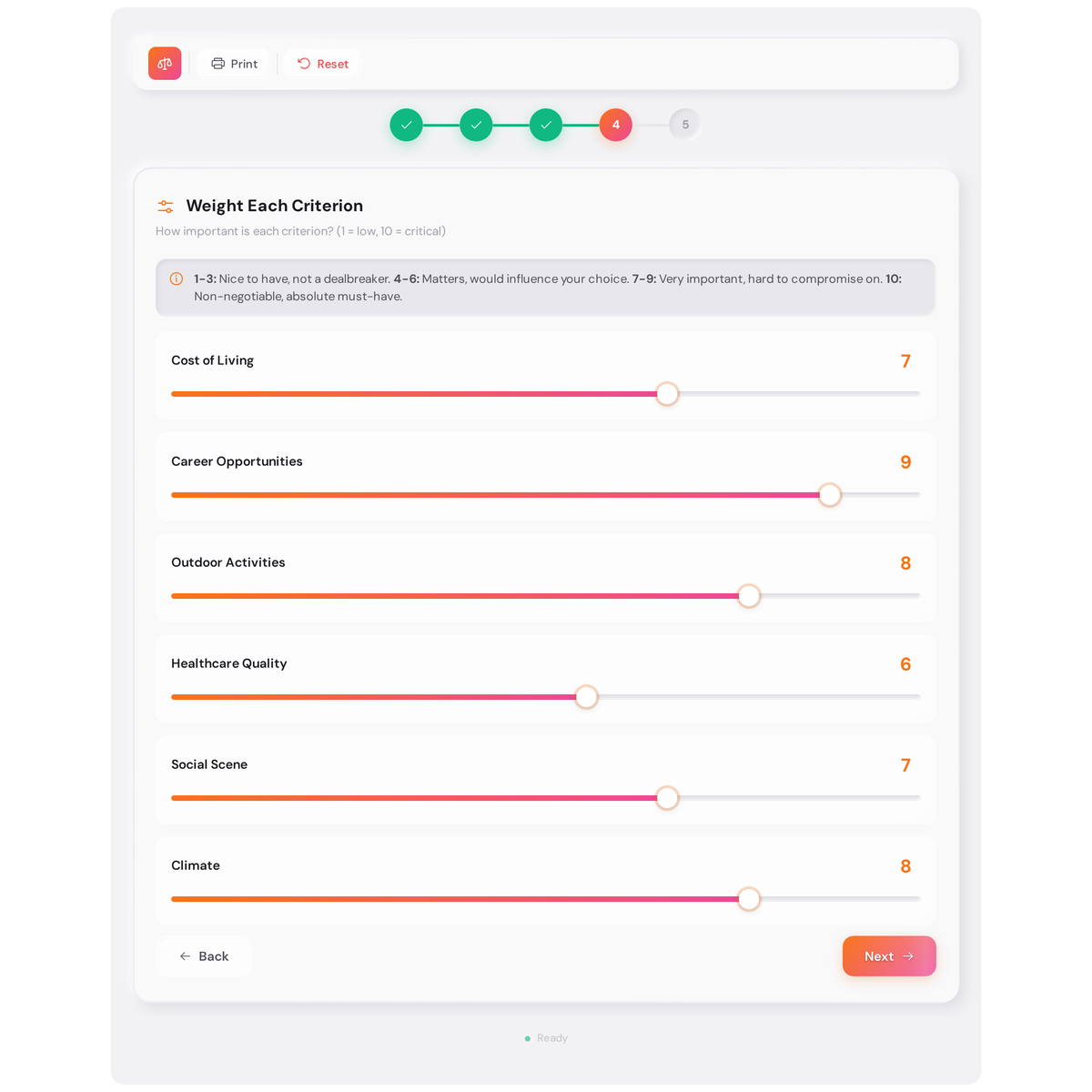

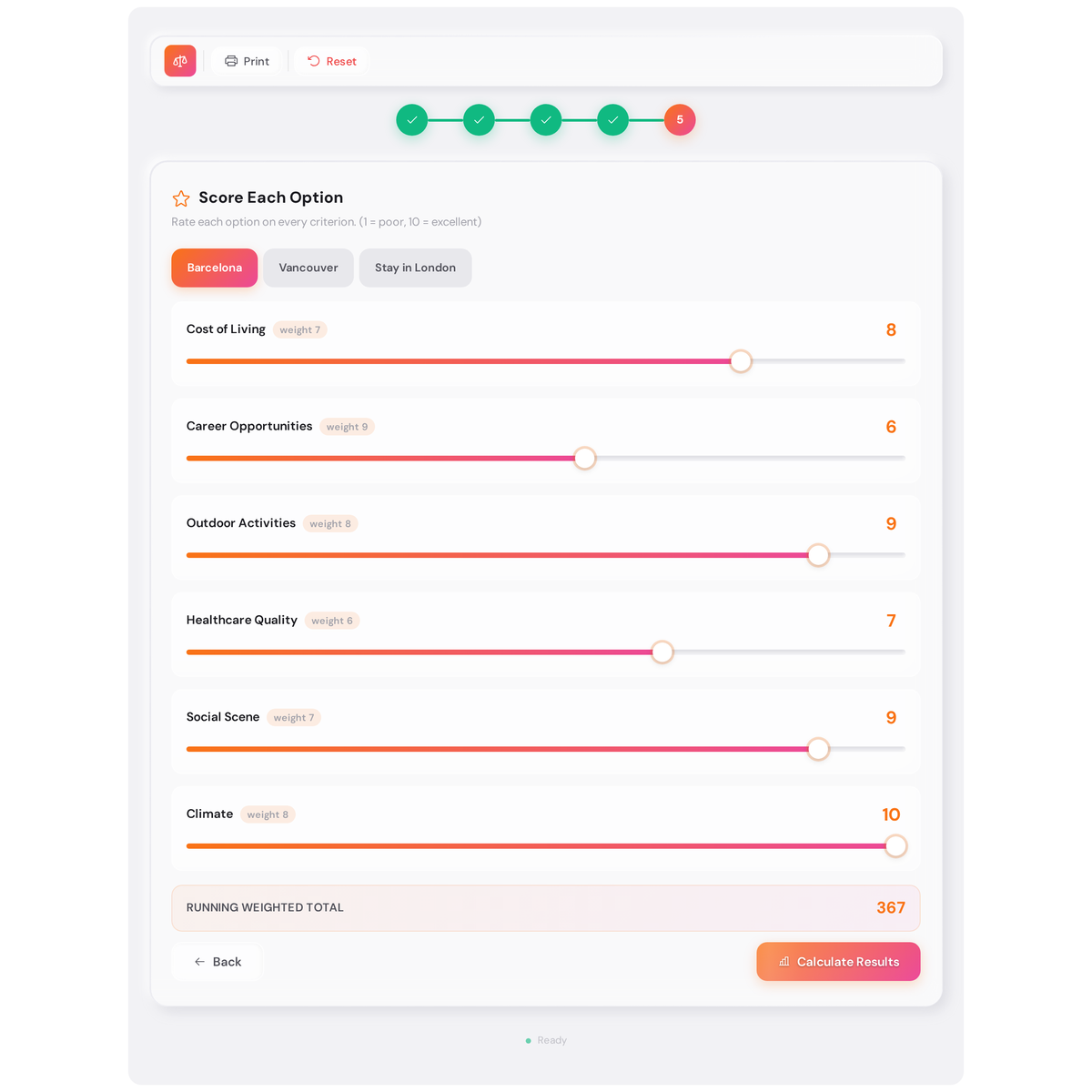

Type your decision question, add your options and criteria, then hit “Calculate Results” and your ranked recommendation appears instantly.

Weighted Decision Matrix Analysis

What this decision making tool actually solves

Most people comparing options bounce between two failure modes. The first is listing pros and cons until they feel vaguely worse than when they started. The second is deferring until the deadline forces a guess. Neither produces a decision you can explain to yourself six months later.

The weighted scoring model fixes this by forcing two honest conversations you’d otherwise skip. First: what do I actually care about here, and by how much? Second: how does each option really perform on those things? Once those questions have concrete answers, the ranking follows automatically. The sensitivity analysis adds a third question that most decision frameworks ignore: how confident should I be? (If shifting one criterion’s weight by two points flips the winner, you’re not actually sure yet.)

The tool is built for decisions where you have two or more real options and two or more criteria that pull in different directions. Career moves, city relocations, software vendor selection, degree programs, investment properties, hiring final candidates. Any situation where “it depends” is the honest answer but a ranked output is what you actually need.

How to use this tool: a screenshot walkthrough

The examples below use a real city comparison decision with Barcelona, Vancouver, and staying in London. Each step shows exactly what you’ll see and what to do next.

How the scoring system works

The math is intentionally transparent so you can sanity-check it. Here is what happens under the hood.

Criteria and weights

Each criterion gets a weight from 1 to 10. The weight represents how much this factor should influence the final outcome relative to the others. The weights are the most important inputs in the whole exercise, so spend time on them. A criterion weighted 9 has nine times the influence of one weighted 1. If that ratio doesn’t feel right when you read it back, adjust it before scoring.

There’s no requirement that weights add up to any specific total. The tool normalises them automatically.
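As a rough illustration of what normalising means here, the sketch below divides each raw weight by the sum of all weights so the shares add up to 1 while the ratios between criteria stay the same. The function name `normalise_weights` and the example weights are assumptions made for illustration; the tool's actual implementation may differ.

```python
def normalise_weights(weights):
    """Scale raw 1-10 weights so they sum to 1, preserving their ratios."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    return {name: w / total for name, w in weights.items()}


# Hypothetical weights for the city comparison (illustrative values only).
raw_weights = {"Career Opportunities": 9, "Cost of Living": 6, "Climate": 3}
shares = normalise_weights(raw_weights)

for name, share in shares.items():
    print(f"{name}: {share:.0%}")
# Career Opportunities: 50%
# Cost of Living: 33%
# Climate: 17%
```

Because every weight is divided by the same total, a criterion weighted 9 still carries three times the influence of one weighted 3 after normalising.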

Scores

Each option is scored 1 to 10 on every criterion. A score of 1 means the option performs poorly on that criterion; 10 means it’s excellent. These scores are relative to your option set, not to some external standard. Barcelona scoring a 6 on Career Opportunities just means it’s middle-of-the-road compared to the other cities you’re considering. (That interpretation matters when you read your results.)

Weighted total

For each option, the tool multiplies every criterion score by its weight, then sums the results. The option with the highest weighted total wins. You can see this breakdown in the “Detailed Breakdown” table in the results, which shows the weighted contribution of each criterion for each option side by side.
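The multiply-and-sum step can be sketched in a few lines. The weights, scores, and the helper name `weighted_totals` below are hypothetical values chosen for illustration, not figures taken from the tool.

```python
def weighted_totals(weights, scores):
    """Return {option: sum of score * weight across every criterion}."""
    return {
        option: sum(weights[c] * option_scores[c] for c in weights)
        for option, option_scores in scores.items()
    }


# Hypothetical inputs for the city comparison (illustrative values only).
weights = {"Career Opportunities": 9, "Cost of Living": 6, "Climate": 3}
scores = {
    "Barcelona": {"Career Opportunities": 6, "Cost of Living": 7, "Climate": 9},
    "Vancouver": {"Career Opportunities": 7, "Cost of Living": 4, "Climate": 5},
    "London": {"Career Opportunities": 9, "Cost of Living": 3, "Climate": 4},
}

totals = weighted_totals(weights, scores)
ranking = sorted(totals.items(), key=lambda item: item[1], reverse=True)

for option, total in ranking:
    print(option, total)
# Barcelona 123
# London 111
# Vancouver 102
```

Using normalised weights instead of raw ones would divide every total by the same number, so the order of the ranking would not change.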

Sensitivity analysis

This is the part most decision matrices skip, and it’s genuinely the most useful output. After ranking the options, the tool tests each criterion weight: how much would this weight need to change for a different option to win? If the Career Opportunities weight would need to drop from 9 to 4 before Vancouver overtakes Barcelona, your result is robust. If it only needs to drop by 1 point, you should probably revisit both your weights and your scores before deciding.
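One simple way to run this kind of weight test is brute force: for each criterion, try every other weight from 1 to 10, starting with the values closest to the original, and record the first one that produces a different winner. This is a minimal sketch under that assumption, with hypothetical inputs and function names; the tool may use a different method.

```python
def winner(weights, scores):
    """Highest weighted total wins; ties keep the option listed first."""
    totals = {o: sum(weights[c] * s[c] for c in weights) for o, s in scores.items()}
    return max(totals, key=totals.get)


def weight_flip_points(weights, scores, low=1, high=10):
    """For each criterion, find the nearest weight that changes the winner."""
    baseline = winner(weights, scores)
    flips = {}
    for criterion, original in weights.items():
        # Try candidate weights closest to the original first.
        candidates = sorted(
            (w for w in range(low, high + 1) if w != original),
            key=lambda w: abs(w - original),
        )
        flips[criterion] = None  # None means no weight in range flips the result
        for w in candidates:
            new_winner = winner({**weights, criterion: w}, scores)
            if new_winner != baseline:
                flips[criterion] = (w, new_winner)
                break
    return baseline, flips


# Hypothetical inputs for the city comparison (illustrative values only).
weights = {"Career Opportunities": 9, "Cost of Living": 6, "Climate": 3}
scores = {
    "Barcelona": {"Career Opportunities": 6, "Cost of Living": 7, "Climate": 9},
    "Vancouver": {"Career Opportunities": 7, "Cost of Living": 4, "Climate": 5},
    "London": {"Career Opportunities": 9, "Cost of Living": 3, "Climate": 4},
}

baseline, flips = weight_flip_points(weights, scores)
print(baseline)  # Barcelona
print(flips)
# {'Career Opportunities': None, 'Cost of Living': (2, 'London'), 'Climate': None}
```

In this made-up example only one weight can flip the result, and Cost of Living would have to fall from 6 to 2 before London overtakes Barcelona, which is the kind of pattern the text describes as robust.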

The sensitivity report flags results with labels including Moderate Confidence (the result holds, but the leader isn’t commanding), Score Flip (a specific criterion score change would change the winner), and Weight Sensitivity (a specific weight change would change the winner). If multiple flip conditions appear, the decision is genuinely close and deserves more thought rather than more data entry.
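A Score Flip check can be sketched the same way: nudge one score at a time by a fixed number of points and see whether the winner changes. The step size, inputs, and function names below are assumptions for illustration; the thresholds the tool actually uses are not documented here.

```python
def winner(weights, scores):
    """Highest weighted total wins; ties keep the option listed first."""
    totals = {o: sum(weights[c] * s[c] for c in weights) for o, s in scores.items()}
    return max(totals, key=totals.get)


def score_flips(weights, scores, step=1, low=1, high=10):
    """List single-score changes of `step` points that change the winner."""
    baseline = winner(weights, scores)
    flips = []
    for option in scores:
        for criterion in weights:
            for delta in (-step, step):
                new_score = scores[option][criterion] + delta
                if not low <= new_score <= high:
                    continue  # stay inside the 1-10 scoring range
                trial = {o: dict(s) for o, s in scores.items()}
                trial[option][criterion] = new_score
                new_winner = winner(weights, trial)
                if new_winner != baseline:
                    flips.append((option, criterion, delta, new_winner))
    return baseline, flips


# Hypothetical inputs for the city comparison (illustrative values only).
weights = {"Career Opportunities": 9, "Cost of Living": 6, "Climate": 3}
scores = {
    "Barcelona": {"Career Opportunities": 6, "Cost of Living": 7, "Climate": 9},
    "Vancouver": {"Career Opportunities": 7, "Cost of Living": 4, "Climate": 5},
    "London": {"Career Opportunities": 9, "Cost of Living": 3, "Climate": 4},
}

print(score_flips(weights, scores, step=1)[1])
# []
print(score_flips(weights, scores, step=2)[1])
# [('Barcelona', 'Career Opportunities', -2, 'London')]
```

Here no one-point change flips the result, but lowering Barcelona's Career Opportunities score by two points would hand the win to London, so that single score is the one worth double-checking.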

The research behind weighted decision matrices

The weighted decision matrix sits within a broader field called multi-criteria decision analysis (MCDA), which has been studied seriously since the 1960s. The core finding across decades of research is consistent: when a decision involves multiple conflicting criteria and people with differing values, structured scoring methods produce better outcomes than unstructured deliberation. The act of writing down your criteria and weights does more cognitive work than the math that follows.

The method draws on work by researchers including Thomas Saaty (whose Analytic Hierarchy Process formalised weight assignment) and decades of applied operations research in manufacturing, policy, and clinical settings. The Pugh Concept Selection matrix, a variant used extensively in product engineering, follows the same logic.

What the research also shows is that people reliably overweight vivid, emotionally salient criteria and underweight slow-building ones like long-term cost or future flexibility. Assigning explicit weights before scoring forces you to commit to your priorities on paper, which makes those hidden biases visible. You don’t need a perfect weighting scheme – you need one that’s honest enough to be worth arguing with. The sensitivity analysis then tells you which arguments are actually worth having.

Who gets the most from this tool

This option comparison tool is a good fit in four situations:

You’ve already narrowed to a shortlist but can’t pick. You’ve done the research and eliminated the obvious losers. Two or three options remain and they’re genuinely close. A weighted matrix gives you a structured way to see whether they’re close because the evidence is close, or because you haven’t committed to your priorities yet. (Often it’s the second one.)

You’re making the decision with other people. Individual scoring on the same matrix reveals where people disagree on facts versus values. If two people score an option very differently on a criterion, that’s a factual disagreement worth resolving. If they weight the criteria differently, that’s a values conversation worth having explicitly rather than burying in a gut-feel vote.

You suspect your gut is tracking something other than your stated priorities. If you already know what you want but feel guilty about it, the matrix will either validate your instinct or show you why the math disagrees. Either outcome is informative. The matrix can’t tell you what to want – it can only show you whether what you’re choosing is consistent with what you say you want.

You need to explain or document the decision. Job offers, vendor selections, relocation decisions, investment choices. Anywhere you’ll want a record of why you chose what you chose, a printed or exported matrix is a clean artefact. The sensitivity analysis section is particularly useful here because it shows the decision was robust, not just convenient.

Related articles

These articles go deeper on the decision-making principles behind this tool:

- Overcoming Analysis Paralysis in Decision Making – if you’ve been stuck in the research loop for weeks and need a way out, this covers the psychology behind analysis paralysis and gives you concrete moves to break the cycle.

- Prioritization Methods Complete Guide – a full survey of structured prioritization frameworks, including when a weighted matrix is the right tool and when simpler methods like MoSCoW or impact-effort grids are a better fit.

- Decision Making Frameworks Guide – compares the major frameworks used by individuals and teams, with guidance on matching the framework to the type of decision you’re actually facing.

What is a weighted decision matrix?

A weighted decision matrix is a structured tool for comparing multiple options against multiple criteria, where each criterion carries an importance weight. You score each option on every criterion, multiply each score by its weight, and sum the results to get a weighted total. The option with the highest weighted total is the mathematical winner. The method is also called a criteria-based decision matrix or weighted scoring model, and it is closely related to the Pugh matrix.

How do I choose the right criteria?

Start with the three things that would most change your decision if they turned out differently than expected. Common categories include cost, time, impact, risk, strategic fit, and reversibility. Avoid criteria that apply equally to all your options – they add noise without changing the ranking. Also avoid splitting one criterion into two closely related ones, which artificially inflates its weight.
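Both pitfalls are easy to see with numbers. The sketch below uses a hypothetical two-city comparison; the criteria "Healthcare Access", "Rent", and "Groceries" are invented for illustration only.

```python
def weighted_totals(weights, scores):
    """Return {option: sum of score * weight across every criterion}."""
    return {o: sum(weights[c] * s[c] for c in weights) for o, s in scores.items()}


weights = {"Career Opportunities": 9, "Cost of Living": 6}
scores = {
    "Barcelona": {"Career Opportunities": 6, "Cost of Living": 7},
    "London": {"Career Opportunities": 9, "Cost of Living": 3},
}
base = weighted_totals(weights, scores)
print(base)  # {'Barcelona': 96, 'London': 99}

# Pitfall 1: a criterion on which every option scores the same.
flat_weights = {**weights, "Healthcare Access": 8}
flat_scores = {o: {**s, "Healthcare Access": 7} for o, s in scores.items()}
flat = weighted_totals(flat_weights, flat_scores)
print(flat)  # {'Barcelona': 152, 'London': 155}

# Pitfall 2: Cost of Living split into two near-duplicate criteria.
split_weights = {"Career Opportunities": 9, "Rent": 6, "Groceries": 6}
split_scores = {
    o: {
        "Career Opportunities": s["Career Opportunities"],
        "Rent": s["Cost of Living"],
        "Groceries": s["Cost of Living"],
    }
    for o, s in scores.items()
}
split = weighted_totals(split_weights, split_scores)
print(split)  # {'Barcelona': 138, 'London': 117}
```

The shared criterion adds 56 points to both cities and leaves the 3-point gap untouched, while the split counts Cost of Living twice and flips the winner from London to Barcelona.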

What does the sensitivity analysis actually tell me?

Sensitivity analysis shows how stable your result is by identifying which weight or score changes would flip the winner. If the top option wins by a large margin across all sensitivity tests, you can decide confidently. If several flip conditions are flagged, the decision is genuinely close and you should either gather more information on the key criteria or accept that either option is a reasonable choice. A fragile result is not a failure – it is useful information.

Can I use this for team decisions?

Yes, and it works particularly well in that context. Have each person complete the matrix independently using the same options and criteria, then compare results. Differences in weighted totals will cluster around two types of disagreement: factual (two people scored the same option differently on the same criterion) and values-based (two people weighted the criteria differently). These are worth separating because they require different conversations to resolve.
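Separating the two kinds of disagreement can be sketched as a simple comparison of two completed matrices. The people, the numbers, and the 3-point threshold below are all assumptions for illustration, not features of the tool.

```python
def compare_matrices(a, b, threshold=3):
    """Return weight gaps (values) and score gaps (facts) of at least `threshold` points."""
    weight_gaps = {
        c: (a["weights"][c], b["weights"][c])
        for c in a["weights"]
        if abs(a["weights"][c] - b["weights"][c]) >= threshold
    }
    score_gaps = {
        (o, c): (a["scores"][o][c], b["scores"][o][c])
        for o in a["scores"]
        for c in a["weights"]
        if abs(a["scores"][o][c] - b["scores"][o][c]) >= threshold
    }
    return weight_gaps, score_gaps


# Two hypothetical people filling in the same matrix independently.
alex = {
    "weights": {"Career Opportunities": 9, "Cost of Living": 6},
    "scores": {
        "Barcelona": {"Career Opportunities": 6, "Cost of Living": 7},
        "London": {"Career Opportunities": 9, "Cost of Living": 3},
    },
}
sam = {
    "weights": {"Career Opportunities": 5, "Cost of Living": 7},
    "scores": {
        "Barcelona": {"Career Opportunities": 6, "Cost of Living": 4},
        "London": {"Career Opportunities": 8, "Cost of Living": 3},
    },
}

weight_gaps, score_gaps = compare_matrices(alex, sam)
print(weight_gaps)  # {'Career Opportunities': (9, 5)}
print(score_gaps)   # {('Barcelona', 'Cost of Living'): (7, 4)}
```

In this example the pair has one values conversation to have (how much career should matter) and one factual question to settle (how expensive Barcelona really is).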

How many options and criteria should I use?

The practical sweet spot is 3 to 4 options and 4 to 6 criteria. If you have fewer than two options, you do not need a matrix. If you have more than six, pre-filter them with a quick qualitative pass before you build the full matrix. Using more than seven or eight criteria dilutes the weights, makes scoring tedious, and often means you have included criteria that are really sub-components of another criterion.

Is this better than just going with my gut?

For simple decisions with one or two criteria, your gut is probably fine. For decisions with three or more conflicting criteria, research on multi-criteria decision analysis consistently shows that structured scoring outperforms unstructured deliberation – not because the math is magic, but because the process forces you to be explicit about your priorities before you score. The matrix is most valuable not as a replacement for intuition but as a way to check whether your intuition is tracking what you actually said you cared about.

Is my data private and secure?

Yes. All information you enter stays in your local browser storage. Nothing is shared with, processed by, or saved on the Goals and Progress servers or any third-party provider. The trade-off is that clearing your browser’s cache and site data will erase your entries. Some tools include a save and load function so you can export your inputs as a local file and reload them later.

Make the call with something to show for it

The weighted decision matrix won’t make the decision for you, and it’s not supposed to. What it does is get your priorities on paper, score your options against those priorities honestly, and tell you how solid or shaky your result is. That’s the difference between a decision and a guess with extra steps.

Scroll up, start with your decision question, and see where you land. If the winner surprises you, that surprise is worth paying attention to.

This tool is part of the free planning tools collection at Goals and Progress. Each tool targets a specific friction point in the goal-setting and decision-making process, from clarifying a fuzzy problem through to tracking execution.